With advancements in electric vehicle (EV) technology, the power density of drive motors has significantly increased, intensifying the challenge of effective thermal management in electric drive systems. Among available cooling methods, oil cooling has emerged as a promising solution because cooling oil is non-conductive and non-magnetic, allowing it to come into direct contact with high-heat-generating components, such as stator windings, to dissipate heat efficiently. This makes it a critical medium for next-generation EV powertrain systems.

Research into oil-cooled motor systems is therefore vital for improving thermal performance and reliability in electric vehicles. However, in direct oil-cooled motor configurations, the coolant lacks fixed flow channels, and the convective heat transfer coefficient between the oil and the motor components is typically determined empirically. This introduces uncertainties in simulation accuracy, often resulting in deviations between numerical predictions and experimental observations.

To address this challenge, the present study employs the multi-objective optimization package pymoo to calibrate an automotive oil-cooled motor model developed using the CAE software shonTA. The optimization performance of two algorithms, Non-Dominated Sorting Genetic Algorithm II (NSGA-II) and NSGA-III, is systematically evaluated to determine their efficacy in improving model fidelity and predictive accuracy.

1. Mathematical Definition of Multi-Objective Optimization Problems

In pymoo, a multi-objective optimization problem is mathematically formulated as follows:

$$\min_{x} \; f_m(x), \quad m = 1, \dots, M$$

subject to:

$$g_j(x) \le 0, \quad j = 1, \dots, J$$

$$h_k(x) = 0, \quad k = 1, \dots, K$$

and bounds:

$$x_i^L \le x_i \le x_i^U, \quad i = 1, \dots, N$$

Here, $f_m(x)$ represents the $m$-th component of the objective function vector. In cases where an objective requires maximization, it can be equivalently reformulated as a minimization problem by negating the objective function.

The functions $g_j(x)$ and $h_k(x)$ denote the sets of inequality and equality constraints, respectively. Not all optimization problems necessarily involve constraints; the presence and type of constraints depend on the specific problem formulation. Notably, satisfying equality constraints is typically more challenging than handling inequality constraints.

Finally, $x_i^L$ and $x_i^U$ represent the lower and upper bounds of the $i$-th variable, defining the feasible search space for the optimization problem.
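As a minimal sketch of this formulation in plain Python (independent of pymoo), the snippet below evaluates a toy problem with two objectives and one inequality constraint. The functions and numbers are made up purely for illustration; the second objective shows how a quantity to be maximized is negated so that everything is minimized, and the constraint is written in the "feasible when less than or equal to zero" convention.

```python
def evaluate(x):
    """Evaluate a toy two-objective problem at the point x = (x1, x2)."""
    x1, x2 = x
    f1 = x1 ** 2 + x2 ** 2                 # objective to minimize
    gain = -((x1 - 1.0) ** 2) - x2 ** 2    # quantity we would like to maximize
    f2 = -gain                             # negated so it can be minimized too
    g1 = x1 + x2 - 1.5                     # x1 + x2 <= 1.5 rewritten as g1 <= 0
    return {"F": [f1, f2], "G": [g1]}


def is_feasible(x, lower, upper, result):
    """Check the variable bounds and all inequality constraints."""
    in_bounds = all(lo <= xi <= hi for xi, lo, hi in zip(x, lower, upper))
    constraints_ok = all(g <= 0.0 for g in result["G"])
    return in_bounds and constraints_ok
```

The dictionary keys `"F"` and `"G"` mirror the names pymoo uses for objective and inequality-constraint values, but nothing here depends on the library.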

2. Pymoo Multi-Objective Optimization Algorithms

Pymoo provides a wide array of optimization algorithms, detailed on the pymoo official website (pymoo.org). This blog selects the multi-objective algorithms NSGA-II and NSGA-III for investigation.

NSGA Algorithms: Non-dominated Sorting Genetic Algorithms. Compared to simple genetic algorithms, NSGA ranks the population into successive non-dominated layers (fronts), so that individuals in better fronts have a greater chance of being passed on to the next generation.
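The layering idea can be illustrated with a short sketch. The code below is a deliberately simple, quadratic-time version of non-dominated sorting written for readability; it is not the fast sorting procedure used inside NSGA-II or pymoo, and the function names are chosen here for illustration only. All objectives are assumed to be minimized.

```python
def dominates(a, b):
    """True if objective vector a Pareto-dominates b (all objectives minimized)."""
    no_worse = all(ai <= bi for ai, bi in zip(a, b))
    strictly_better = any(ai < bi for ai, bi in zip(a, b))
    return no_worse and strictly_better


def non_dominated_fronts(points):
    """Split objective vectors into fronts; returns index lists, best front first."""
    remaining = set(range(len(points)))
    fronts = []
    while remaining:
        # A point belongs to the current front if nothing still remaining dominates it.
        front = sorted(
            i for i in remaining
            if not any(dominates(points[j], points[i]) for j in remaining if j != i)
        )
        fronts.append(front)
        remaining -= set(front)
    return fronts
```

For example, with the points `(1, 4)`, `(2, 2)`, `(4, 1)`, `(3, 3)`, and `(5, 5)`, the first three are mutually non-dominated and form the first front, `(3, 3)` forms the second, and `(5, 5)` the third.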

NSGA-II Algorithm: “Non-dominated Sorting Genetic Algorithm with Elite Strategy,” an enhanced version of NSGA. NSGA-II uses an “elite strategy” in which parent and offspring populations are merged and compete to produce the next generation, preventing the loss of high-quality individuals. Together with its fast non-dominated sorting procedure, this elitism also speeds up convergence.

NSGA-III Algorithm: The “Reference-Point-Based Non-Dominated Sorting Genetic Algorithm,” an enhanced version of NSGA-II.

NSGA-III is designed to preserve solution diversity better than NSGA-II, particularly as the number of objectives grows. NSGA-II selects superior solutions as “parents” for generating the next generation based on non-dominated sorting and crowding distance. NSGA-III keeps the non-dominated sorting but replaces crowding distance with a set of reference points. During selection, it associates each solution with its nearest reference point and favors solutions near reference points that are still sparsely populated, which yields a more uniformly distributed solution set. This approach better handles complex multi-objective optimization problems and offers advantages for problems with many objectives.
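Reference points for NSGA-III are commonly generated with the Das-Dennis construction, which places evenly spaced points on the unit simplex. The sketch below is a plain-Python version for illustration; the function names are hypothetical and the partition counts are example values, not settings taken from this study.

```python
from itertools import combinations
from math import comb


def reference_points(n_obj, n_partitions):
    """Evenly spaced points on the unit simplex (each point's components sum to 1)."""
    points = []
    n_slots = n_partitions + n_obj - 1
    # Stars and bars: every choice of divider positions gives one way to
    # split n_partitions units among n_obj objectives.
    for dividers in combinations(range(n_slots), n_obj - 1):
        edges = (-1,) + dividers + (n_slots,)
        counts = [edges[i + 1] - edges[i] - 1 for i in range(n_obj)]
        points.append(tuple(c / n_partitions for c in counts))
    return points


def count_reference_points(n_obj, n_partitions):
    """Number of points the construction produces, without building them."""
    return comb(n_partitions + n_obj - 1, n_obj - 1)
```

The count grows quickly with the number of objectives: three objectives with 12 partitions give 91 points, while seven objectives already give 84 points with only 3 partitions. This is one reason the number of partitions has to be chosen with the population size in mind.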

However, NSGA-III’s increased computational overhead in selection operations results in relatively higher algorithmic complexity. Overall, both NSGA-II and NSGA-III are excellent multi-objective optimization algorithms suited for different problem types. NSGA-III excels in solution diversity and is ideal for complex multi-objective problems, while NSGA-II is relatively simpler and suitable for general multi-objective optimization tasks. The choice between them depends on the problem’s nature and scale. Generally, NSGA-II is recommended for applications with two optimization objectives, whereas NSGA-III is preferred for high-dimensional applications involving three or more objectives.

3. Calibration Process Overview

3.1 shonTA Software Modelling

shonTA is a three-dimensional thermal analysis and simulation software that integrates both thermal network and finite element methods (FEM). It supports multiple modes of heat transfer, including conduction, convection, and radiation.

The software is primarily used for three-dimensional thermal equilibrium analyses of transmission components, such as electric motors, gearboxes, and reducers, enabling accurate predictions of temperature distributions and thermal performance under various operating conditions.

For this study, shonTA was utilized to construct a detailed motor thermal model. Upon completing the model setup, the software generates a control data file (data.json) that encapsulates the full motor simulation parameters and configuration. This exported file serves as the basis for subsequent parameter optimization, particularly for refining thermal parameters such as the convective heat transfer coefficient.
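To illustrate how an optimizer might write trial parameter values into such an exported file, the sketch below loads a JSON file, overwrites selected entries, and saves it again. The internal layout of the shonTA control file is not described in this post, so the flat top-level structure and every key name used here are assumptions for illustration only.

```python
import json
from pathlib import Path


def set_parameters(json_path, updates):
    """Overwrite selected top-level entries of a JSON control file and save it.

    Assumes a flat file layout; a real control file may nest its parameters.
    """
    path = Path(json_path)
    data = json.loads(path.read_text(encoding="utf-8"))
    for key, value in updates.items():
        if key not in data:
            # Fail loudly rather than silently adding an unknown parameter.
            raise KeyError(f"{key!r} not found in {path.name}")
        data[key] = value
    path.write_text(json.dumps(data, indent=2), encoding="utf-8")
    return data
```

Raising on unknown keys is a deliberate choice in this sketch: a misspelled parameter name would otherwise leave the simulation unchanged and make the calibration appear insensitive to that variable.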

3.2 Python Main Function Code

Python scripts orchestrate the calibration process, coupling shonTA simulations with pymoo’s NSGA-II and NSGA-III algorithms. The calibration variables include convective heat transfer coefficients at the motor end covers.

Table 1: Python Main Program Code Steps

| Steps | Description |
|---|---|
| Step 1 | Set the calibration parameters in the controldata.json file to a modifiable state to facilitate the subsequent optimization search; save the reference values in CSV format for reading by the following steps. |
| Step 2 | Define the shonTA external solver interface function, enabling the main program to call the shonTA solver for model computation; this function also reads the result file and compares it against the reference values to obtain the objective function values. |
| Step 3 | Define the multi-objective optimization problem, where the results of the objective function are output by the external solver interface defined in Step 2. |
| Step 4 | Perform optimization using the pymoo optimization algorithm; the optimization problem used by the algorithm is defined in Step 3. |
| Step 5 | Output the optimization results. |
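The steps in Table 1 could be wired together roughly as follows. This is only a sketch under stated assumptions: `toy_evaluate` is a hypothetical stand-in for the external solver interface of Step 2 (the actual shonTA call is not shown in this post), and the population size, generation count, and any bounds passed in are placeholder values. The pymoo imports sit inside `run_calibration` so that the stand-in can be tried without pymoo installed.

```python
def toy_evaluate(h):
    """Stand-in for 'run the external solver and compare with reference values'.

    Purely illustrative algebra on four coefficients, not a thermal model.
    """
    return [abs(h[0] + h[1] - 300.0), abs(h[2] - h[3]), abs(h[0] - 2.0 * h[3])]


def run_calibration(evaluate, n_obj, lower, upper, pop_size=40, n_gen=30, seed=1):
    """Steps 3 to 5: define the problem, run NSGA-II, return the results."""
    from pymoo.algorithms.moo.nsga2 import NSGA2
    from pymoo.core.problem import ElementwiseProblem
    from pymoo.optimize import minimize

    class CalibrationProblem(ElementwiseProblem):
        def __init__(self):
            super().__init__(n_var=len(lower), n_obj=n_obj, xl=lower, xu=upper)

        def _evaluate(self, x, out, *args, **kwargs):
            # One solver call per candidate; all objectives are minimized.
            out["F"] = evaluate(list(x))

    result = minimize(
        CalibrationProblem(),
        NSGA2(pop_size=pop_size),
        ("n_gen", n_gen),
        seed=seed,
        verbose=False,
    )
    # result.X holds the non-dominated parameter sets, result.F their objectives.
    return result.X, result.F
```

A call such as `X, F = run_calibration(toy_evaluate, 3, [10.0] * 4, [500.0] * 4)` would return the non-dominated set found for the stand-in. To try NSGA-III instead, `NSGA2` can be replaced by pymoo's `NSGA3`, which additionally requires a `ref_dirs` argument, for example from `get_reference_directions("das-dennis", 3, n_partitions=12)` in `pymoo.util.ref_dirs`.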

For further information about the shonTA software and the Python code, please contact us.

4. Results and Discussion

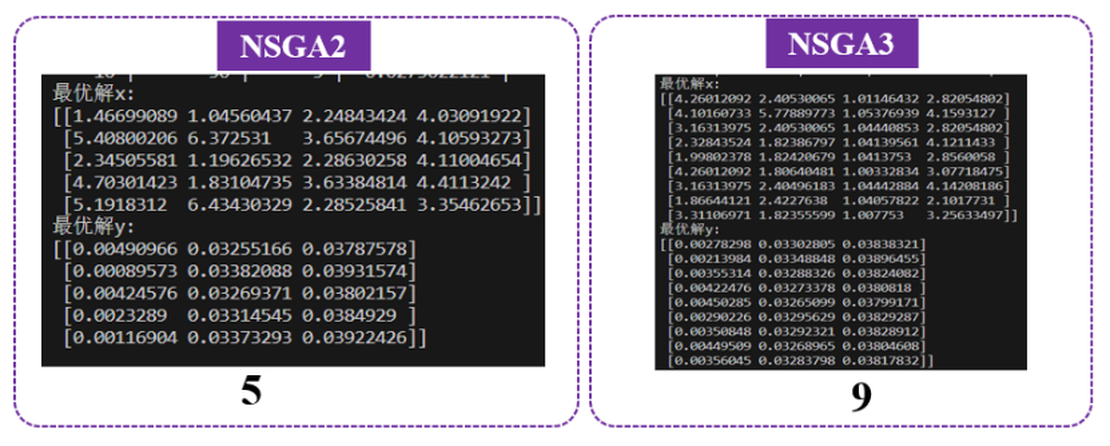

In this study, the axial and radial convective heat transfer coefficients at the left and right end covers of the motor were selected as calibration parameters, yielding a total of four optimization variables. To evaluate the thermal performance of the model, the temperature differences at three points, located in the permanent magnet, winding, and stator regions of the motor, were defined as the optimization objectives.
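One plausible way to turn such temperature differences into objective values is sketched below. It assumes that each objective is the absolute deviation between a simulated temperature and a reference temperature read from a CSV file; the column names, location labels, and the choice of absolute deviation are assumptions made for this example, not details confirmed by the study.

```python
import csv
import io


def read_reference(csv_text):
    """Parse 'location,temperature' rows into a dict of floats (hypothetical layout)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["location"]: float(row["temperature"]) for row in reader}


def temperature_objectives(simulated, reference):
    """One objective per location: |T_sim - T_ref|, in the order of the reference file."""
    return [abs(simulated[name] - t_ref) for name, t_ref in reference.items()]
```

Keeping one objective per measurement location, rather than summing the deviations into a single error, is what makes the calibration a multi-objective problem: a parameter set that matches the winding temperature well may still miss the magnet temperature.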

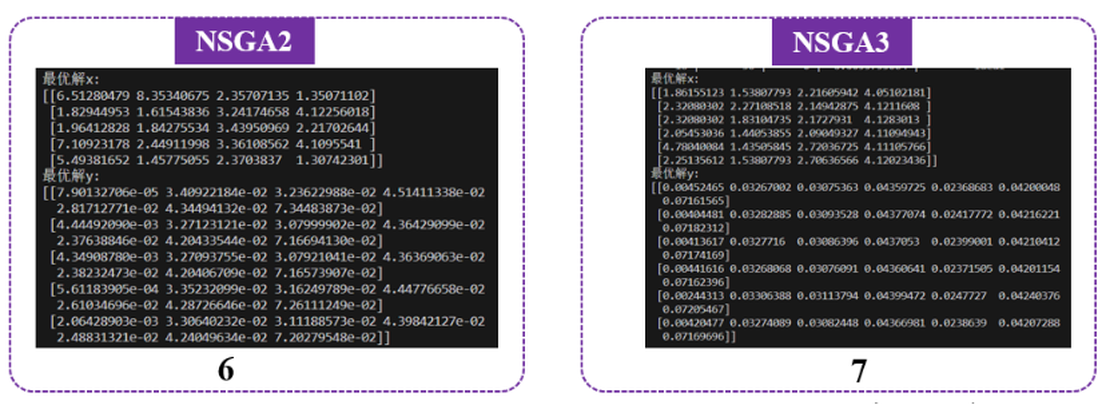

The optimization outcomes obtained using the NSGA-II and NSGA-III algorithms are illustrated in Figure 2. The NSGA-II algorithm converged to five locally optimal solutions. In contrast, the NSGA-III algorithm identified nine, suggesting that it covers the Pareto front more broadly in this multi-objective search space.

Additional optimization objectives were introduced by selecting temperature differences at seven specific locations: two points on the permanent magnet, three points on the windings, and two points on the stator components. The optimization results from the NSGA-II and NSGA-III algorithms are shown in Figure 3. The NSGA-II algorithm identified six local optima, while the NSGA-III algorithm identified seven local optima.

The comparative results indicate that NSGA-III identifies a greater number of optimal solutions than NSGA-II, making it more effective for high-dimensional multi-objective optimization problems. Based on detailed analysis, this performance improvement can be attributed primarily to two key mechanisms: population initialization and reference point utilization.

Population Initialization

NSGA-III is set up with a predefined set of uniformly distributed reference points in objective space. These reference points approximate evenly spaced locations on the ideal Pareto front, so that from the first generation onward the population is steered toward broad, even coverage of the front rather than toward wherever the initial individuals happen to fall. This approach enhances diversity and reduces the likelihood of premature convergence.

NSGA-II, in contrast, has no such reference set. Its randomly initialized population can cluster around certain regions of the objective space, and only the crowding distance counteracts this, which can reduce search efficiency and increase the risk of stagnation near local optima.

Reference Point Utilization

During the optimization process, NSGA-III normalizes the objective values and associates each solution with its nearest reference direction, that is, the line from the origin through a reference point. When the last front has to be truncated, solutions attached to the least crowded reference directions are preferred. This niching facilitates more uniform exploration of the Pareto frontier and helps maintain population diversity throughout the evolutionary process.
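The role of the reference points can be made concrete with a small sketch. In NSGA-III, each normalized solution is attached to the reference direction it lies closest to (measured perpendicular to the line through the origin), and the number of solutions per direction is then used to favor underrepresented regions. The code below shows only this association and counting step, with hypothetical function names; normalization and the actual selection loop are omitted.

```python
import math


def perpendicular_distance(point, direction):
    """Distance from an objective vector to the line through the origin along direction."""
    norm_sq = sum(d * d for d in direction)
    scale = sum(p * d for p, d in zip(point, direction)) / norm_sq
    return math.sqrt(sum((p - scale * d) ** 2 for p, d in zip(point, direction)))


def associate(points, directions):
    """Index of the closest reference direction for every point."""
    return [
        min(range(len(directions)), key=lambda k: perpendicular_distance(p, directions[k]))
        for p in points
    ]


def niche_counts(assignment, n_directions):
    """How many solutions are attached to each reference direction."""
    counts = [0] * n_directions
    for k in assignment:
        counts[k] += 1
    return counts
```

Directions with a count of zero or one mark regions of the front that are still thinly covered, and candidates associated with them are the ones a niching selection would keep first.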

NSGA-II, on the other hand, employs non-dominated sorting and crowding distance mechanisms to preserve diversity. While effective for problems with fewer objectives, these methods are less capable of maintaining diversity in high-dimensional and complex optimization scenarios.
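For comparison, the crowding distance used by NSGA-II can be sketched in a few lines. For each objective, the solutions of one front are sorted, the two boundary solutions receive an infinite distance, and every interior solution accumulates the normalized gap between its two neighbors. This is a plain-Python illustration of the standard definition, not code taken from pymoo.

```python
def crowding_distance(front):
    """Crowding distance of each objective vector in one non-dominated front."""
    n = len(front)
    if n == 0:
        return []
    distance = [0.0] * n
    for m in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][m])
        f_min, f_max = front[order[0]][m], front[order[-1]][m]
        # Boundary solutions are always kept, so they get an infinite distance.
        distance[order[0]] = distance[order[-1]] = float("inf")
        if f_max == f_min:
            continue
        for pos in range(1, n - 1):
            gap = front[order[pos + 1]][m] - front[order[pos - 1]][m]
            distance[order[pos]] += gap / (f_max - f_min)
    return distance
```

Because the measure is built from per-objective neighbor gaps, it becomes less informative as the number of objectives grows, which is consistent with the weaker diversity preservation described above for high-dimensional problems.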

In summary, through its enhanced population initialization strategy and systematic use of reference points, NSGA-III achieves superior convergence on the Pareto front while maintaining population diversity, making it more robust and scalable for solving complex multi-objective optimization problems compared to NSGA-II.

5. Outlook

The internal cooling oil circuit of automotive oil-cooled motors is complex. Future work should identify which parameters require calibration and which of them exert the most significant influence on the overall temperature. Additionally, further refinement and investigation into other multi-objective optimization algorithms are needed to enhance the optimization process.

References:

[1] pymoo.org

[2] Davin, T. et al. (2015). Experimental study of oil cooling systems for electric motors. Applied Thermal Engineering, 75, 1–13.

[3] Zhang, X. et al. (2015). An Efficient Approach to Non-dominated Sorting for Evolutionary Multi-objective Optimization. IEEE Transactions on Evolutionary Computation.